---

license: apache-2.0

language:

- en

tag: text-generation

tags:

- medical

datasets:

- Open-Orca/OpenOrca

- pubmed

- medmcqa

- maximegmd/medqa_alpaca_format

base_model: mistralai/Mistral-7B-v0.1

metrics:

- accuracy

---

# Model Card for Internist.ai 7b

Internist.ai 7b is a medical domain large language model trained by medical doctors to demonstrate the benefits of a **physician-in-the-loop** approach. The training data was carefully curated by medical doctors to ensure clinical relevance and required quality for clinical practice.

**Internist.ai 7b is the first 7b model to score above the 60% pass threshold on MedQA (USMLE), and it outperforms models of similar size across most medical evaluations.**

This model serves as a proof of concept and larger models trained on a larger corpus of medical literature are planned. Do not hesitate to reach out to us if you would like to sponsor some compute to speed up this training.

## Advisory Notice

The model was designed by medical doctors for medical doctors and did not undergo specific training to address potential security issues when used by people who are not medical professionals.

We highly recommend against the use of this model in a live environment without extensive evaluation through prospective clinical trials and additional training to meet the required safety levels.

## Model Details

- **Developed by:** [UCLouvain](https://uclouvain.be/) and [Cliniques Universitaires Saint-Luc](https://saintluc.be/)

- **Language(s):** English (mainly)

- **Model License:** [APACHE 2.0 LICENSE](LICENSE)

- **Code License:** [APACHE 2.0 LICENSE](LICENSE)

- **Continue-pretrained from model:** [Mistral-7B-v0.1](https://huggingface.co/mistralai/Mistral-7B-v0.1)

- **Context length:** 4096 tokens

- **Knowledge Cutoff:** October 2023

### Model Sources

- **Trainer:** [Axolotl](https://github.com/OpenAccess-AI-Collective/axolotl)

- **Paper:** [Impact of High-Quality, Mixed-Domain Data on the Performance of Medical Language Models](https://doi.org/10.1093/jamia/ocae120)

## Uses

This model was trained to demonstrate the benefit of using high-quality, relevant medical literature together with general data to retain capabilities in other domains. The model was therefore not trained for any specific use and did not benefit from additional instruction tuning to ensure safety.

The model in its current state can be useful for medical professionals as an assistant, be it for clinical decision support or documentation. We do not recommend the use of this model by non-professionals, who may not be able to notice errors.

We recommend additional task-specific training and safety evaluation before using the model in a real-world setting.

### Format

The model uses the Alpaca format, which is available as a chat template:

```python
from transformers import AutoModelForCausalLM, AutoTokenizer

device = "cuda"  # the device to load the model onto

model = AutoModelForCausalLM.from_pretrained("internistai/base-7b-v0.2")
tokenizer = AutoTokenizer.from_pretrained("internistai/base-7b-v0.2")

messages = [
    {"role": "user", "content": "Describe the anatomy of nutcracker syndrome"},
]

# Render the conversation with the model's chat template and tokenize it
encodeds = tokenizer.apply_chat_template(messages, add_generation_prompt=True, return_tensors="pt")

model_inputs = encodeds.to(device)
model.to(device)

# Sample up to 1000 new tokens, then decode the full sequence (prompt included)
generated_ids = model.generate(model_inputs, max_new_tokens=1000, do_sample=True)
decoded = tokenizer.batch_decode(generated_ids)
print(decoded[0])
```
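For readers unfamiliar with the Alpaca format, the sketch below builds a single-turn prompt by hand. It assumes the widely used Alpaca layout (a short preamble followed by `### Instruction:` and `### Response:` sections); the preamble wording and the helper name `build_alpaca_prompt` are illustrative assumptions, and the chat template shipped with the tokenizer remains the authoritative source for the exact string.

```python
# Hypothetical helper: approximates the common Alpaca prompt layout.
# The exact wording used by this model's chat template may differ.
ALPACA_PREAMBLE = (
    "Below is an instruction that describes a task. "
    "Write a response that appropriately completes the request."
)


def build_alpaca_prompt(instruction: str) -> str:
    """Return a single-turn prompt in the common Alpaca layout."""
    return (
        f"{ALPACA_PREAMBLE}\n\n"
        f"### Instruction:\n{instruction.strip()}\n\n"
        "### Response:\n"
    )


if __name__ == "__main__":
    print(build_alpaca_prompt("Describe the anatomy of nutcracker syndrome"))
```

In practice, `tokenizer.apply_chat_template` is the safer choice because it applies whatever template the model was actually trained with.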

### Out-of-Scope Use

We do not recommend using this model for natural language generation in a production environment, finetuned or otherwise.

## Professional Evaluation

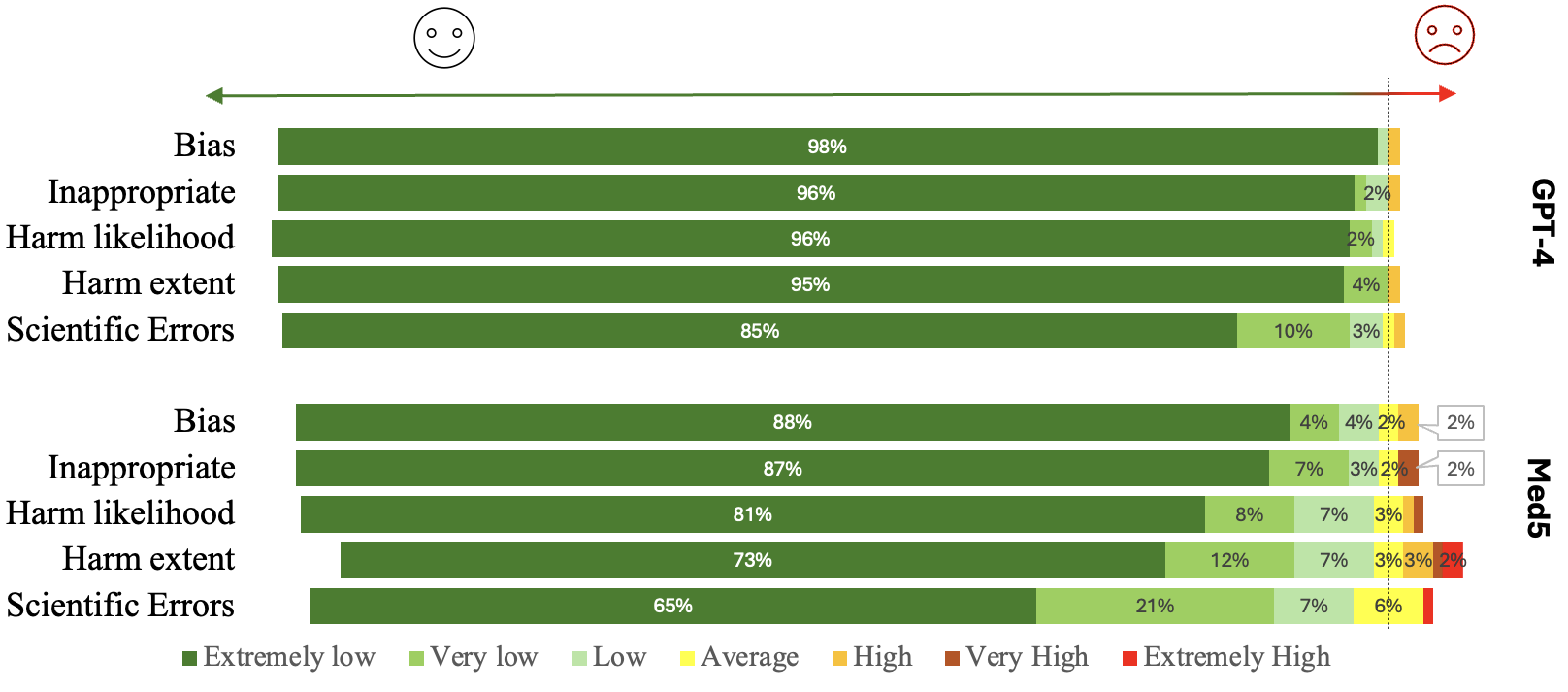

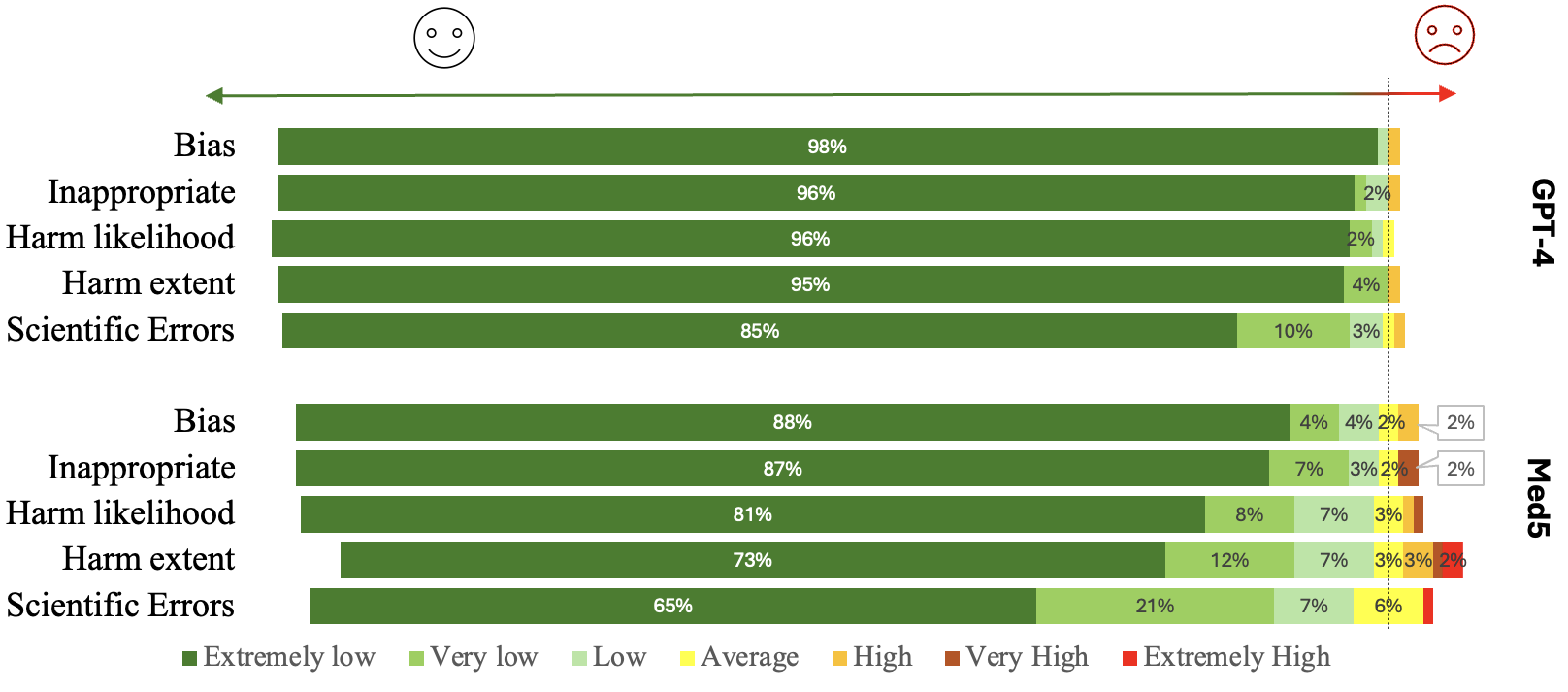

We created a free-response evaluation dataset of 100 questions and prompted both the model and GPT-4, as a comparison, with these questions. We then collected the prompt/answer pairs and presented them to 10 medical doctors of different specialties, along with questions to be answered on a 7-point Likert scale (see the paper for more information).

## Training Details

### Training Data

The training data for Internist.ai 7b contains a total of 2.3B tokens:

- [**General Domain**](https://huggingface.co/datasets/Open-Orca/OpenOrca): OpenOrca-GPT4 is a state-of-the-art general domain dataset generated from Flan prompts using GPT-4.

- **Medical Guidelines**: 11,332 articles from UpToDate were included, as well as domain-specific guidelines provided by physicians to cover the [USMLE Content Outline](https://www.usmle.org/sites/default/files/2021-08/USMLE_Content_Outline.pdf).

- **Medical Books**: 10,376 textbooks were sourced from PMC LitArch and our university library.

- **Synthetic Data**: We generated 400M tokens by prompting a larger model with instructions to transform and adapt extracts from the Medical Guidelines.

*Data Availability*: Considering the datasets contain proprietary information, we will not be releasing the datasets publicly. Regarding the synthetic dataset, as we show in the paper, the model trained exclusively on this dataset performs very poorly and was not up to our standards. Due to its poor quality we decided not to release it.

### Training Procedure

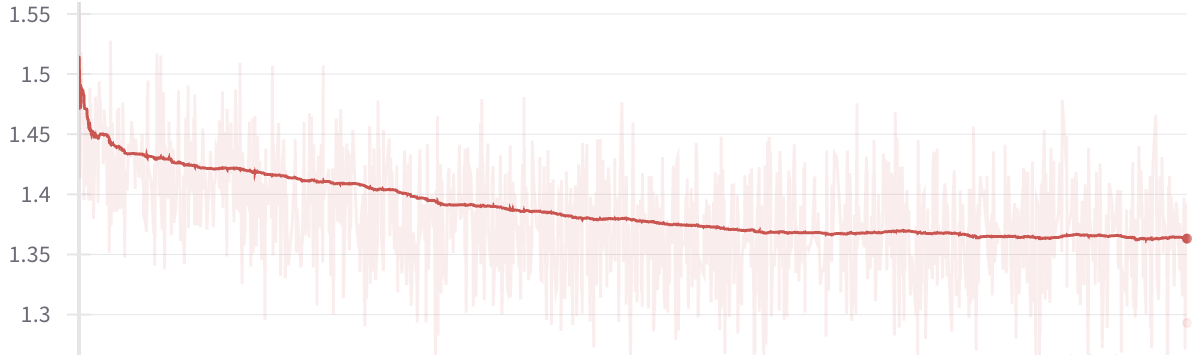

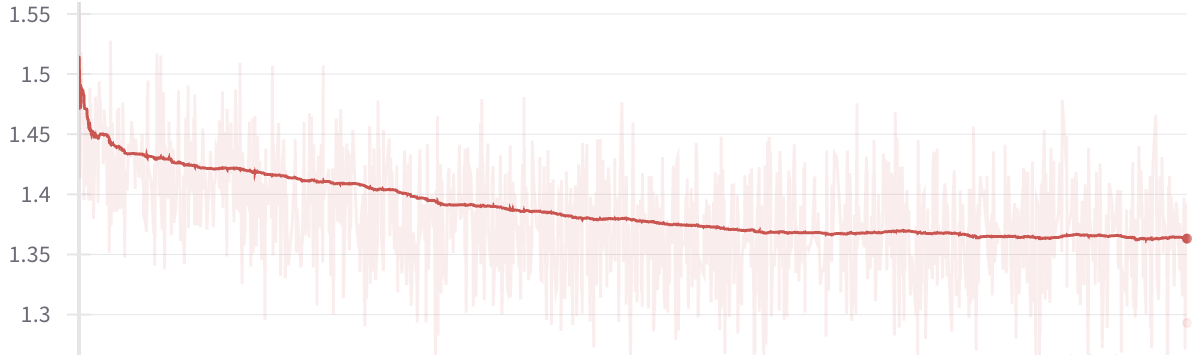

We used Axolotl to train on a server with 4 NVIDIA A100 80GB GPUs for a total of 450 GPU hours. We used FlashAttention, NEFTune and sample packing with the parameters described below.
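Sample packing concatenates several short training examples into one sequence so that fewer tokens are spent on padding. The sketch below is a simplified first-fit-decreasing illustration of the idea, not Axolotl's actual implementation; the function name `pack_samples` and the example lengths are assumptions, and only the 4096-token sequence length comes from this card.

```python
from typing import List


def pack_samples(lengths: List[int], max_len: int = 4096) -> List[List[int]]:
    """Group sample indices into bins whose total token count is <= max_len.

    First-fit decreasing: visit samples from longest to shortest and place
    each one in the first bin that still has enough room.
    """
    bins: List[List[int]] = []  # sample indices for each packed sequence
    free: List[int] = []        # remaining token capacity of each bin
    order = sorted(range(len(lengths)), key=lambda i: lengths[i], reverse=True)
    for i in order:
        n = lengths[i]
        if n > max_len:
            raise ValueError(f"sample {i} has {n} tokens, more than max_len={max_len}")
        for b, room in enumerate(free):
            if n <= room:
                bins[b].append(i)
                free[b] -= n
                break
        else:
            # No existing bin has room, so open a new one
            bins.append([i])
            free.append(max_len - n)
    return bins


if __name__ == "__main__":
    lengths = [3000, 1000, 2500, 1500, 96, 4096]  # made-up token counts
    for seq in pack_samples(lengths):
        print(seq, sum(lengths[i] for i in seq))
```

With these made-up lengths, six samples fit into three packed sequences instead of six padded ones.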

#### Training Hyperparameters

| Parameter | Value |
| --- | --- |
| bf16 | true |
| lr | 6e-6 |
| eps | 1e-5 |
| epochs | 4 |
| betas | \[0.9, 0.95\] |
| weight decay | 0.1 |
| Batch size | 192,000 tokens |
| seq length | 4096 |
| lr scheduler | cosine |
| min lr | 1e-8 |
| NEFT alpha | 5 |
| warmup iteration | 100 |
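To make the scheduler settings concrete, the sketch below computes a learning rate with linear warmup followed by cosine decay, using the peak learning rate (6e-6), minimum learning rate (1e-8) and 100 warmup iterations from the table. The total number of optimizer steps is not stated in this card, so `total_steps` is a placeholder assumption, and the exact warmup and decay formula used by the trainer may differ.

```python
import math


def lr_at_step(step: int, total_steps: int, peak_lr: float = 6e-6,
               min_lr: float = 1e-8, warmup_steps: int = 100) -> float:
    """Linear warmup to peak_lr, then cosine decay down to min_lr."""
    if step < warmup_steps:
        # Ramp up linearly so the last warmup step reaches peak_lr
        return peak_lr * (step + 1) / warmup_steps
    # Fraction of the decay phase completed, clamped to [0, 1]
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    progress = min(progress, 1.0)
    cosine = 0.5 * (1.0 + math.cos(math.pi * progress))
    return min_lr + (peak_lr - min_lr) * cosine


if __name__ == "__main__":
    total_steps = 10_000  # assumption: the real step count is not given in this card
    for step in (0, 99, 100, 5_050, 10_000):
        print(step, f"{lr_at_step(step, total_steps):.3e}")
```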

## Evaluation

### Testing Data & Metrics

#### Testing Data

- [MedQA (USMLE) - 4 options](https://huggingface.co/datasets/bigbio/med_qa)

- [MedMCQA](https://huggingface.co/datasets/medmcqa)

- [PubMedQA](https://huggingface.co/datasets/bigbio/pubmed_qa)

- [MMLU](https://huggingface.co/datasets/hails/mmlu_no_train)

#### Metrics

- Accuracy: we ran standardized 0-shot benchmarks using [lm-evaluation-harness](https://github.com/maximegmd/lm-evaluation-harness/tree/big-refactor/lm_eval).
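As a rough illustration of the metric, the sketch below computes multiple-choice accuracy the way likelihood-based harnesses commonly do: each answer option receives a score (for example, the log-likelihood of the option text given the question), the highest-scoring option is taken as the prediction, and accuracy is the fraction of predictions that match the gold answer. The function name and the scores are made-up illustrations, not outputs of this model or code from lm-evaluation-harness.

```python
from typing import Sequence


def multiple_choice_accuracy(option_scores: Sequence[Sequence[float]],
                             gold: Sequence[int]) -> float:
    """Fraction of questions whose highest-scoring option is the gold answer."""
    if len(option_scores) != len(gold):
        raise ValueError("option_scores and gold must have the same length")
    if not gold:
        raise ValueError("at least one question is required")
    correct = 0
    for scores, answer in zip(option_scores, gold):
        # Predict the option with the highest score (e.g. log-likelihood)
        predicted = max(range(len(scores)), key=lambda i: scores[i])
        correct += int(predicted == answer)
    return correct / len(gold)


if __name__ == "__main__":
    # Made-up per-option scores for four 4-option questions
    scores = [
        [-4.2, -1.3, -3.8, -5.0],
        [-2.1, -2.9, -0.7, -3.3],
        [-1.9, -2.5, -3.1, -0.8],
        [-0.5, -2.2, -2.8, -3.0],
    ]
    gold = [1, 2, 0, 0]
    print(multiple_choice_accuracy(scores, gold))  # prints 0.75
```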

### Results

We report benchmarks on MedQA (4 options), MedMCQA, PubMedQA and the medical subsets of MMLU for our model and for models of similar size. Internist.ai 7b is the first model of its size to reach the 60% USMLE passing score on the MedQA benchmark.

| Benchmark | Internist.ai 7b | PMC LLaMA 7b\* | Mistral 7b | Meditron 7b\*\* |
| ----------- | ------------- | ------------ | ---------- | ----------- |
| MedQA | **60.5** | 27.7 (44.7) | 48.7 | 52.0 |
| MedMCQA | 55.8 | 32.2 (51.4) | 45.7 | **59.2** |
| PubMedQA | **79.4** | 67.8 (74.6) | 75.8 | 74.4 |
| MMLU Professional Medicine | **76.1** | 19.5 | 65.8 | 26.6 |
| MMLU Clinical Knowledge | **70.6** | 23.8 | 61.1 | 35.5 |
| MMLU Anatomy | **65.9** | 18.5 | 52.6 | 42.6 |
| MMLU College Medicine | **63.0** | 23.7 | 55.5 | 28.9 |
| MMLU Medical Genetics | **71.0** | 32.0 | 68.0 | 46.0 |

\*: PMC LLaMA 7b performed poorly on the benchmark, likely due to a formatting mismatch and a lack of instruction tuning. Where available, we include in parentheses the results reported by the authors.

\*\*: Meditron 7b's MMLU results are reported for transparency but are inconsistent with the average of 54.2 reported in their paper. Do not hesitate to communicate the details for each category so we can update the table.

## Citation

**BibTeX:**

If you use Internist.ai 7b, please cite us:

```
@article{10.1093/jamia/ocae120,
    author = {Griot, Maxime and Hemptinne, Coralie and Vanderdonckt, Jean and Yuksel, Demet},
    title = "{Impact of high-quality, mixed-domain data on the performance of medical language models}",
    journal = {Journal of the American Medical Informatics Association},
    pages = {ocae120},
    year = {2024},
    month = {05},
    abstract = "{To optimize the training strategy of large language models for medical applications, focusing on creating clinically relevant systems that efficiently integrate into healthcare settings, while ensuring high standards of accuracy and reliability. We curated a comprehensive collection of high-quality, domain-specific data and used it to train several models, each with different subsets of this data. These models were rigorously evaluated against standard medical benchmarks, such as the USMLE, to measure their performance. Furthermore, for a thorough effectiveness assessment, they were compared with other state-of-the-art medical models of comparable size. The models trained with a mix of high-quality, domain-specific, and general data showed superior performance over those trained on larger, less clinically relevant datasets (P < .001). Our 7-billion-parameter model Med5 scores 60.5\% on MedQA, outperforming the previous best of 49.3\% from comparable models, and becomes the first of its size to achieve a passing score on the USMLE. Additionally, this model retained its proficiency in general domain tasks, comparable to state-of-the-art general domain models of similar size. Our findings underscore the importance of integrating high-quality, domain-specific data in training large language models for medical purposes. The balanced approach between specialized and general data significantly enhances the model’s clinical relevance and performance. This study sets a new standard in medical language models, proving that a strategically trained, smaller model can outperform larger ones in clinical relevance and general proficiency, highlighting the importance of data quality and expert curation in generative artificial intelligence for healthcare applications.}",
    issn = {1527-974X},
    doi = {10.1093/jamia/ocae120},
    url = {https://doi.org/10.1093/jamia/ocae120},
    eprint = {https://academic.oup.com/jamia/advance-article-pdf/doi/10.1093/jamia/ocae120/57845903/ocae120.pdf},
}
```
